FOR some time, weather enthusiasts across Australia have been noticing rapid temperature fluctuations at the ‘latest observations’ page at the Australian Bureau of Meteorology’s website. For example, Peter Cornish, a retired hydrologist, wrote to the Bureau on 17 December 2012 asking whether the 1.5 degrees Celsius drop in temperature in the space of one minute at Sydney’s Observatory Hill could be a quirk of the new electronic temperature sensors. Ken Stewart, a retired school principal, requested temperature data for Hervey Bay after noticing a 2.1 degrees Celsius temperature change in the space of one minute on 22 February 2017.

So begins my article to be published later today at The Spectator online, perhaps to be entitled ‘More Hot Days Caused by Purpose-Designed Temperature Sensors’. [That article was eventually published at both The Spectator and WUWT.]

But if you read a bit beyond the headline you will see that my issue is not so much with the temperature sensors as the way in which the Bureau is not averaging according to calibration. In particular, and to paraphrase some more from the article…

Beginning some twenty years ago, electronic sensors have progressively replaced mercury thermometers in weather stations across Australia. The sensors can respond much more quickly to changes in temperature, and on a hot day, the air is warmed by turbulent streams of ground-heated air that can fluctuate by more than 2 degrees on a scale of seconds. So, if the Bureau simply changed from mercury thermometers to electronic sensors, it could increase the daily range of temperatures, and potentially even generate record hot days simply because of the faster response time of the sensors.

Except that, to ensure consistency with measurements from mercury thermometers, there is an international literature, and there are international standards, that specify how spot-readings from sensors need to be averaged – a literature and methodology being ignored by the Bureau.

To be clear, the UK Met office takes 60 x 1 second samples each minute from its sensors, and then averages these. In the US, they have decided this is too short a period, and the standard there is to average over a fixed 5-minute period. In Australia, however, the Bureau takes not five-minute averages, nor even one-minute averages, but just one second spot-readings.

Check temperatures at the ‘latest observations’ page at the Bureau’s website and you would assume the value had been averaged over perhaps 10 minutes. But it is dangerous to assume anything when it comes to our Bureau. The values listed at the ‘observations’ page actually represent the last second of the last minute. The daily maximum (which you can find at a different page) is the highest one-second reading for the previous 24-hour period: a spot one-second reading in contravention of every international standard. There is absolutely no averaging.

Then again, how many of you knew that the mean daily temperature as reported by meteorological offices around the world is not an average of temperatures recorded through the day but rather, by convention, the sum of the highest and the lowest divided by two?

This convention developed because (surface) temperature measurements were originally instantaneous measurements from mercury thermometers recorded manually each morning (providing the minima) and afternoon (providing the maxima).

So, in the UK the daily maximum from a weather station with an electronic sensor will be the highest value derived from the averaging of 60 samples over that one minute interval, while in Australia, the daily maximum will be the highest one-second spot reading.

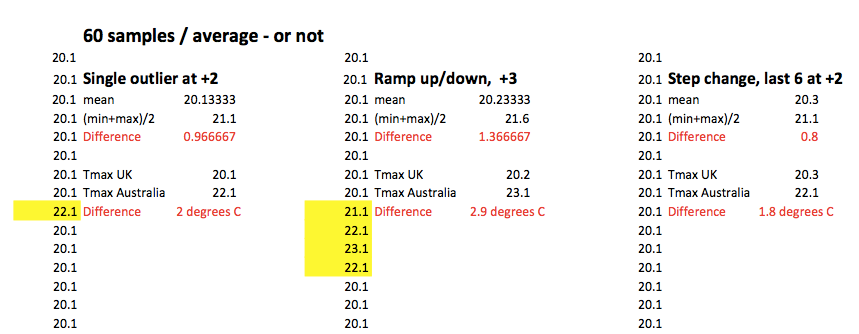

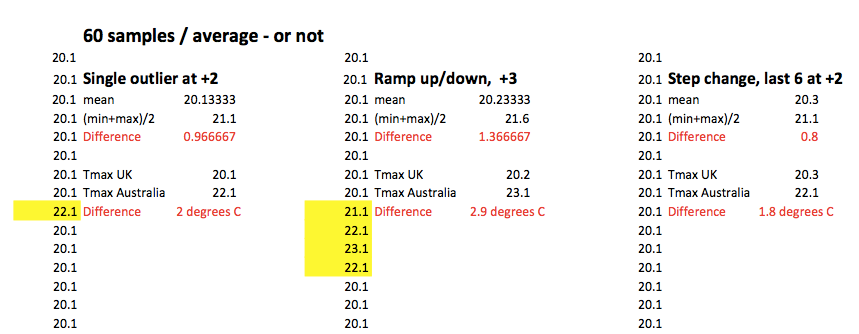

And, the method for averaging from the sensors does matter, as shown in the attached spreadsheet using synthetic values to illustrate this point, and summarized in Figure 1.

The values shown in the three worked examples fall well within the general range of variation possible within a one-minute interval, considering the highest, lowest and last-second values shown in some of the datasets purchased by Ken Stewart from the Bureau earlier this year.

In the first example, which could be symptomatic of ‘sensor noise’, there is a single outlier of 22.1 in the 60 one-second readings from the sensor. If these are averaged, as is done by the UK Met office, then the recorded temperature measurement for that minute is 20.1 degrees Celsius. If, however, the highest one-second value is recorded, which is the method applied in Australia, the recorded temperature would be 22.1 degrees Celsius. There is a whole 2 degrees of difference. If we apply the meteorological convention for generating mean daily values, then the difference is 1 degree Celsius (0.9666 rounded).

In the second example, which could reflect a wind direction change, or jet plane exhaust, the difference between the UK Met office method of averaging over 1 minute versus the Australian method of taking a one second spot reading is the rather large 2.9 degrees Celsius.

In the third example, where there is a step change, the difference between the UK and Australian methods for treatment of sub-minute readings is 1.8 degrees Celsius.
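The contrast between averaging and spot-reading can be sketched in a few lines of Python. This is only an illustration: the 60 values below are made up for the purpose (59 steady readings plus one outlier) and are not the values in the spreadsheet, and the function names are my own.

```python
from statistics import mean

def one_minute_average(samples):
    """Average all one-second samples in the minute (the averaging approach)."""
    return mean(samples)

def highest_spot_reading(samples):
    """Take the single highest one-second sample (the spot-reading approach)."""
    return max(samples)

# Synthetic minute: 59 steady readings plus a single one-second outlier.
# Illustrative values only; they are NOT taken from the spreadsheet.
samples = [20.0] * 59 + [22.1]

averaged = one_minute_average(samples)
spot_max = highest_spot_reading(samples)
difference = spot_max - averaged

print(f"one-minute average:         {averaged:.2f}")
print(f"highest one-second reading: {spot_max:.2f}")
print(f"difference in maximum:      {difference:.2f}")

# Under the (max + min) / 2 convention, a shift in the recorded maximum
# moves the daily mean by half that amount, if the minimum is unchanged.
print(f"effect on daily mean:       {difference / 2:.2f}")
```

With a single outlier in 60 samples, the average barely moves while the spot maximum takes the full value of the outlier.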

More recently the Bureau has attempted to suggest yet another method of averaging, as detailed in their Fast Facts. But this is really just obfuscation. In more recent correspondence with me, CEO Andrew Johnson has used the correct term for how long it takes a sensor to adjust to a step change in temperature, which is ‘time constant’.

*****

The spreadsheet detailing the different averaging methods can be downloaded here: Averaging-NF-JM

I am blessed to be part of an Alt-Met network that includes Kneel (who sent me a first version of this spreadsheet), Ken Stewart, Lance Pidgeon, Phill and others… thanks for the conversations.

Jennifer Marohasy BSc PhD is a critical thinker with expertise in the scientific method.


The second of two ALMOS automatic internal quality control checks means that the high value would need to be repeated for a second second to get the weirdest outlier of the day award (not being deleted) without disqualification by the automatic randomisation referee. 4.2.4.1 “The rate test checks that the difference between the current measurement and the previous one is no greater than 0.4°C. If the difference is greater, the measurement is replaced with a marker denoting that the current value is unavailable.” http://www.bom.gov.au/inside/Review_of_Bureau_of_Meteorology_Automatic_Weather_Stations.pdf
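As a rough sketch of how the quoted rate test would treat a spike, here is one possible reading of it in Python. It assumes each reading is compared with the raw previous reading; the review's wording does not say whether a rejected reading still serves as the "previous" one, so that is an assumption. The function name and sample values are hypothetical.

```python
from typing import List, Optional

RATE_LIMIT_C = 0.4  # threshold quoted from the review, in degrees Celsius

def apply_rate_test(readings: List[float],
                    limit: float = RATE_LIMIT_C) -> List[Optional[float]]:
    """Replace any reading that differs from the previous raw reading by
    more than `limit` with None (standing in for the 'unavailable' marker)."""
    checked: List[Optional[float]] = []
    previous: Optional[float] = None
    for current in readings:
        if previous is not None and abs(current - previous) > limit:
            checked.append(None)
        else:
            checked.append(current)
        # Assumption: the comparison always uses the raw previous reading,
        # even when that reading was itself rejected.
        previous = current
    return checked

single_spike = apply_rate_test([20.0, 22.1, 20.0])
repeated_spike = apply_rate_test([20.0, 22.1, 22.1, 20.0])

print(single_spike)
print(repeated_spike)
```

Under that assumption a one-second spike is rejected (as is the reading that follows it), while the second second of a repeated spike passes the test and remains eligible to be the day's maximum.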

Lance Pidgeon

This really nails it. The BoM has gone with the method which produces the hottest result. This combined with their proven track record of setting limits on LOW/MINIMUM temperatures shows the BoM has taken an activist position on temperature.

The only constant thing about a time constant is the shape of the decay curve. In this video a glass thermometer is taken from ice water and dunked into boiling water. A big difference between the no-moving-parts version of a time constant from a platinum resistance thermometer device and the moving liquid in the glass thermometer is the shape of the rate vs time curve. The glass expands before the liquid, so the curve is distorted by this short-term negative feedback of the bore expansion counteracting the slower liquid expansion. There is no glass to cause this with the PRTD, so it can far better produce spikes. Watch the liquid accelerate then decelerate: https://www.youtube.com/watch?v=xOZvfdfl33c

The piece to go with this may not run in The Spectator until Thursday.

Thanks Jen, and thanks to all the contributors mentioned for their super-sleuthing.

There are two distinct issues, and it bemuses me that they are being merged into one. This plays into the BOM’s preferred home-ground advantage – that the topic is complex, and only they are the experts.

The first issue is the nature of the new, super-sensitive sensors being used by the BOM, and the need to highlight their methodology whereby “every sensitive, one-second temperature reading can win the max-temperature prize for the day”.

Just imagine… it’s a warm day and you are wandering across the local footy oval. All of a sudden, you feel a slight gust of much warmer air that lasts no more than 3 seconds, and yet you feel the temperature increase on your body… and then it’s gone again. Should THIS momentary temperature increase that you felt be recorded as the maximum temperature for the day for that city? Really, BOM? Really? We have to make the average person understand the rank stupidity of the BOM’s decision to do this for the last 20 years, but not for the preceding 100 years with glass mercury thermometers.

The way to do this is not through a spreadsheet of numbers and calculations, but through a line-graph. The graph shows one hour of one-second readings for an afternoon where the temperature is hovering around a max of 22 degrees, with variations of 0.2 degrees either side. In the middle of the line graph is a 3-second spike to 23.5 degrees that visually stands out like a sore thumb against the line-graph showing 22-ish degrees either side of it. And this brief spike wins the maximum temperature for the day. All people will instantly understand the stupidity of this.

The second issue is to do with the solution, and it should include discussions of numbers, spreadsheets, time periods, and averages. A great proportion of the population turns off when this second issue is discussed. They will naturally leave it to the “experts” to work it out.

And this is why the two issues need to be described and illustrated SEPARATELY if we want to convince the general public of the existence of a major problem.

I despair whenever I read that wonderful Graham Lloyd fellow in The Australian writing about the BOM as one big technical issue involving sensors, averaging, WMO standards, etc. What will crystallise the problem in the public’s mind is this – publish a line-graph showing 2 lines:

(a) the 3-second temperature-spike problem described above, and

(b) what a glass thermometer would have reported for the 100 years prior to sensors.

The glass-thermometer line would hardly show the 0.2 degree variations either side of 22 degrees but would definitely NOT show the 3-second spike.

People will instantly get it that the BOM maximum for each day is now a nonsense, and will begin to understand why we keep having “the hottest ever” records every few months.
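The two lines for such a graph could be generated along these lines. This is a sketch only: it treats both instruments as simple first-order lags, which is a simplification (a real glass thermometer does not respond quite like that), and the two time constants are hypothetical numbers chosen for illustration, not measured values. The ±0.2 degree wobble is left out to keep the example short.

```python
import math

def first_order_response(air_temps, time_constant_s, dt_s=1.0):
    """Indicated temperature of an instrument modelled as a first-order lag."""
    alpha = 1.0 - math.exp(-dt_s / time_constant_s)
    indicated = air_temps[0]
    out = []
    for air in air_temps:
        # Move a fixed fraction of the way towards the air temperature.
        indicated += alpha * (air - indicated)
        out.append(indicated)
    return out

# One hour of one-second air temperatures at 22.0 degrees,
# with a 3-second spike to 23.5 degrees in the middle.
air = [22.0] * 3600
for i in range(1800, 1803):
    air[i] = 23.5

# Hypothetical time constants, for illustration only.
fast_sensor = first_order_response(air, time_constant_s=2.0)
slow_thermometer = first_order_response(air, time_constant_s=60.0)

print(f"fast sensor maximum:      {max(fast_sensor):.2f}")
print(f"slow thermometer maximum: {max(slow_thermometer):.2f}")
```

Plotting `fast_sensor` and `slow_thermometer` against time gives the two-line picture: the fast line jumps most of the way to the spike, while the slow line barely moves.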

Best of luck in your endeavours Jen,

Ron

Ok, my wife has been commenting on why there can be so much of a difference between what our barometer, etc., shows and what is announced over the radio. I find this article very interesting as it goes a long way towards explaining it. Thanks for this.

Thanks Crowbar. I’ve put in various requests for such parallel data so I would be able to illustrate as much with real data… so far nothing has been forthcoming.

Incredibly after 20+ years there is no such data to be found anywhere.

More recently I’ve put in a request to buy some of their ‘custom-built’ sensors (more about these in the article to be published by The Spectator) and some of their old mercury thermometers surplus to demand.

We are perhaps going to have to set up our own network of weather stations to get this data.

Hello, Jennifer, from Brooklyn, NY.

I was just wondering what the reaction is down there to your recent columns. Is there a public uproar? Is any action being taken against the people that instituted these fraudulent practices in the Bureau of Meteorology, and is any action being taken to correct those practices?

Thanks again for your valuable work.

William

The BoM does good work and I despise those who have an agenda of climate change skepticism trying to destroy trust in the Bureau!

Hello again Jen,

Thanks for your response.

I’m curious… is the BOM saying that they can’t even give you e.g. yesterday’s one-second readings for the full 24 hours for one station?

We have to be able to see the scope/size of these sensitive spikes. I also have a hunch that spikes near the overnight minimum will be less frequent and less severe than spikes near the daytime maximum, but I could be wrong.

Without the one-second data, we have no idea.

William Taylor,

There is no public uproar because most of the public don’t know, or understand – about this issue of spot-readings, with no averaging. There has been very limited reporting in the one national newspaper, and nothing in any other media.

I’m expecting The Spectator Online to publish my column really explaining everything that I can within 1,000 words tomorrow. This column includes comment: “I don’t believe in conspiracies of silence except when it comes to Harvey Weinstein and the Australian Bureau of Meteorology.”

Crowbar,

You are correct: ‘they can’t even give me yesterday’s one-second readings for the full 24 hours for one station’.

Another one Jennifer:

The metadata equipment list for Alice Springs Airport says that it had both an electric probe and liquid-in-glass thermometers for the period from 21st March 1991 until the glass thermometers were removed on 28th April 2016. The probe became the primary instrument on 1st November 1996. The period here is just over 25 years. Was no parallel data kept? Has it all been lost?

No need for fancy averages and “corrections”. Just glue the sensor to an ounce chunk of glass…. the thermal inertia will do the rest.

I think it very clear we “the sceptic community” need to set up a station on each continent outfitted with one of each historical and current sensor, then publish the actual raw data along with the nearest “official” station. Just comparing the graphs would enlighten many, scare some, and P.O. a lot…

Well done Jennifer,

I would like to see an audit on how the one million dollars a day is used. Past and present records shouldn’t be that hard to pass on to anyone doing some research.

There may also be a problem with rain gauges as well, Jennifer.

Over the past four days in Casino, there has been quite a lot of rain, possibly around 100mm.

Yet the AWS has recorded just over 10mm (nearby Lismore has had 125mm).

http://www.weatherzone.com.au/nsw/northern-rivers/casino

When you check the BoM records, you note that the totals go up only 0.2mm each time.

http://www.bom.gov.au/products/IDN60801/IDN60801.94573.shtml

(This is an ongoing record and will soon no longer be available.)

If there was a problem with the gauge then it should show a ‘-‘.

Otherwise it will be viewed as a true reading, giving a false impression for the month.

I have also noticed differences in the Gladstone and Gold Coast records.

Crowbar: re “I also have a hunch that spikes near the overnight minimum will be less frequent and less severe than spikes near the daytime maximum,” You are correct. Rapid large fluctuations occur during daylight hours. Unless there are unusual weather conditions, after sundown and before sunrise the fluctuations are small- often +/- 0.1 or 0.2, (and AWS uncertainty is +/- 0.2C). See my post at

https://kenskingdom.wordpress.com/2017/10/15/summer-temperatures-in-south-central-queensland-part-1-diurnal-patterns-of-temperature-change/

Ken Stewart

That there’s no uproar is infuriating even from 17,000 miles away. If you can, please let us know what the reaction to your column is.

Thanks,

William

Thank you Ken. Awesome analysis in your blog article.

So you were able to purchase 1-minute readings but not 1-second readings?

I just feel it in my bones… a graph of 1-second readings for an hour either side of a daily maximum would blow the lid off this issue with the BOM.

PS The comment by T.Smit above is missing either the /sarc tag or #istandwithauthorityfigures

“Science is the belief in the fallibility of experts” – Richard Feynman.

Never stop questioning… especially, especially when bureaucrats circle the wagons.

William

The article about ‘conspiracies of silence’ has now been published at both The Spectator online and also at WUWT.

The readers of WUWT ‘get it’, some brilliant comments following… https://wattsupwiththat.com/2017/10/19/in-australia-faulty-bom-temperature-sensors-contribute-to-hottest-year-ever/

Anthony Watts has provided me with some very useful information off-line, and I’ve been contacted by a couple of WUWT readers with inside information… watch this space.

Best regards,

Let’s hope you’ve got some killer leads Jen.

Thanks again for fighting the good fight.

Excellent, most excellent work, Jennifer.

It is unfortunate that the SOP at the BOM tosses away readings. We get better results if the data are recorded, then transmitted and saved centrally. With the appropriate compression methods, it’s not an unmanageable quantity of data.

If nothing can be done about the ‘tossed’ data, then the next best thing (in terms of approximating the actual temperature) is to develop an algorithm that takes the data that ARE saved (the min, max and last seconds of each minute) and treats them something like the sliding-window averages that are used for Arctic sea ice. Even a 10-minute average of the mins, a 10-min average of the max and a 10-min average of the last would give a sense of the trend for that time period of the 3 values. Yes, it would be a homogenized trio of values, but it would set the range for the min and max for the sliding window of time, and show trends that would call out the outliers, while also showing the effect of squall line fronts, the beginning of a snow storm, strong rain outflow, etc.
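A minimal sketch of that idea in Python, assuming the saved data arrive as one (min, max, last) triple per minute. The record layout, names and sample values here are hypothetical, and a trailing 10-minute window is only one of several ways to build a sliding average.

```python
from typing import List, NamedTuple

class MinuteRecord(NamedTuple):
    lowest: float   # lowest one-second reading in the minute
    highest: float  # highest one-second reading in the minute
    last: float     # reading from the last second of the minute

def sliding_mean(values: List[float], window: int = 10) -> List[float]:
    """Trailing mean over `window` consecutive values, one per full window."""
    return [
        sum(values[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(values))
    ]

# Synthetic data: 20 quiet minutes, with one minute containing a spike.
records = [MinuteRecord(21.8, 22.2, 22.0) for _ in range(20)]
records[12] = MinuteRecord(21.9, 23.5, 22.1)

smoothed_min = sliding_mean([r.lowest for r in records])
smoothed_max = sliding_mean([r.highest for r in records])
smoothed_last = sliding_mean([r.last for r in records])

raw_peak = max(r.highest for r in records)
print(f"raw maximum:             {raw_peak:.2f}")
print(f"peak of 10-min mean max: {max(smoothed_max):.2f}")
```

The gap between the raw maximum and the smoothed maximum is one simple way to call out a minute whose highest reading is out of line with its neighbours.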

All the best.

My wife and I tend to ‘peek’ at our iPads many times during the day and, living in the Blue Mountains (about three kilometres from the Mt Boyce weather station, perched on the escarpment at just over 1000m and directly above the Megalong Valley), we check the weather (from Oz Weather) very frequently. It always used to puzzle us that the max temperature being shown was nowhere near the forecast maximum and yet, at the end of the day, there it was – the maximum temperature was close to the forecast. It is now clear what is happening. It is very common up here, on warm days, to get bursts of warm air from the valley almost 300m below and about 2 degrees warmer, coming up the escarpment in the afternoon. One can actually feel them if walking along the escarpment top. This obviously is what is providing the short-term spike which is recorded as “the hottest evaaah”. Thanks Jennifer and team.

Thanks for your work JM.

We also have rain gauge problems at our local BOM (Geelong Racetrack).

On numerous occasions we have had biggish falls ( > 15 mm ) that are recorded as 0 mm or significantly less than what actually occurred. I used to write to BOM, who advised the mechanism may not be optimally located.

The problem is compounded because we often have long spells without any rain (which is what BOM tell me causes the problem with the gauge) and then 10+ mm a day for several days contiguously – the whole event can be missed before they get round to fixing it. I can’t be bothered contacting BOM anymore, but they are well aware that it is a recurring problem spanning many years. Some of the events that I contacted them over represented > 10% of annual rainfall *each* – BOM did not seem at all concerned…

Thanks, Jen, for this much-needed beam of light.

What a wonderful tool these AWSs are to generate the necessary warmth.

Just think of the money that will flow from the private sector to the public sector as a result.

What’s not to like?

Just ask T Smit above.

The fakery at the bakery is in robust health.

EM Smith. That idea of comparable readings sounds good to me. Ian George, I agree with you about rainfall readings. Unless the local airport is in a rain shadow.

Jennifer, you deserve a medal for this work. This is the most appalling scientific fraud I have ever heard about and I cannot believe our media will not run with it. Don’t stop.

My bet is that every other allegedly world class meteorological organization shares in this lack of integrity.